Key Takeaways

- Start from the decision: Write the question only after you know what you will do differently based on each answer.

- Make options MECE: Answer choices should be mutually exclusive and collectively exhaustive (plus "Other" when needed).

- Pick the right format: Single-select for one best answer; multi-select for "check all"; use Likert scale questions and rating scale questions for intensity.

- Reduce bias: Use neutral wording, balanced scales, and smart ordering/randomization to limit response bias.

- Report multi-select correctly: For checkboxes, show % of respondents selecting each option (not totals that add to 100%).

What are multiple choice questions in a survey?

Multiple choice questions (MCQs) are closed-ended survey questions that ask respondents to select from a predefined list of answer options. They are one of the most common question types because they are quick to answer, easy to code, and straightforward to summarize.

In practice, MCQs usually come in two core styles:

- Single-select: pick exactly one option (radio buttons).

- Multi-select: pick all that apply (checkboxes).

If you want a deeper overview of the multiple choice question format and how it compares to other question types, read that overview first, then come back for the examples and build workflow below.

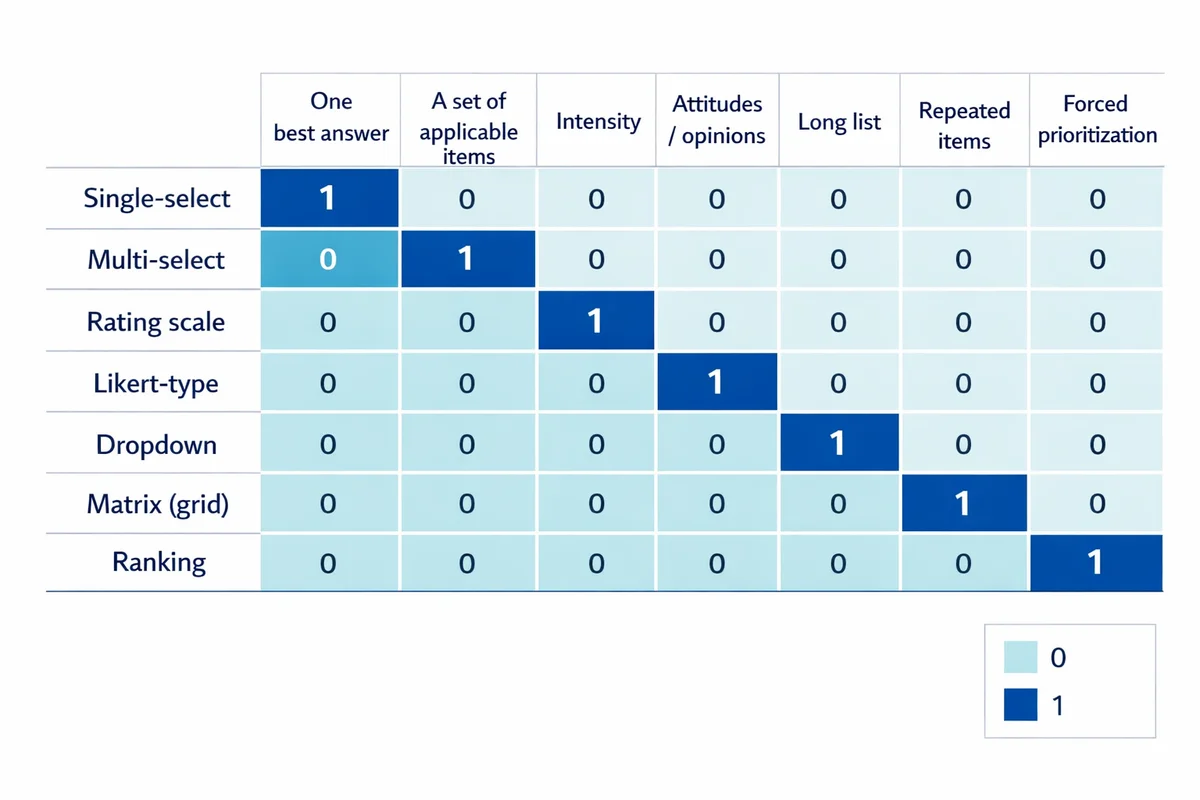

Multiple choice question types (and when to use each)

Most surveys reuse a small set of MCQ formats. The best format depends on what you need the respondent to do: choose one, choose many, rate intensity, rank priorities, or select from a long list.

| Type | Use when you need... | Watch out for... |
|---|---|---|
| Single-select (one answer) | One best/most accurate answer (e.g., primary reason, main channel). | Forcing a single pick when reality is multi-causal. |
| Multi-select (check all that apply) | A set of applicable items (e.g., features used, pain points experienced). | Harder analysis; option order can change selection behavior. |
| Rating scale | Intensity (satisfaction, ease, agreement). See scale design tips. | Unlabeled midpoints, inconsistent direction across questions. |
| Likert-type (worded scale) | Attitudes/opinions using consistent agree/disagree options. See Likert-type response options. | Acquiescence ("yea-saying") if statements are leading. |
| Dropdown | A long list (countries, locations, product SKUs) where scanning is burdensome. | Hidden options; mobile friction; increased missing data. |
| Matrix (grid) | Same response options repeated across multiple items (efficient for short item sets). | Straightlining and satisficing; keep matrices short. |
| Ranking | Forced prioritization (top needs, feature backlog). | High effort; avoid long lists and use only when tradeoffs matter. |

If you will report a single headline number (e.g., "Top reason"), use single-select. If you will build a checklist of themes (e.g., "Top issues customers mention"), use multi-select.

Advantages and limitations (so you do not overuse MCQs)

MCQs are great for clean, scannable surveys, but they can also hide reality if you force respondents into the wrong bins.

| Advantages | Limitations |
|---|---|
| Fast to answer; low friction on mobile. | Time-consuming to design good options (the hard work is upfront). |
| Easy to analyze, compare, and trend over time. | Can miss unexpected answers unless you include "Other" or follow-ups. |
| Standardizes responses (less messy coding than free text). | Option order and wording can introduce measurement error and bias. |
| Works well with skip logic and segmentation. | Checkbox questions can produce ambiguous interpretation if not analyzed correctly. |

When you need discovery (new themes, new language, unknown options), consider starting with a small qualitative study or pairing MCQs with open-ended questions to capture what your list missed.

A simple workflow to design MCQ options from a research objective

Many guides jump straight to "write clear options." The bigger win is designing options that map cleanly to a decision.

Step 1: Write the decision you want to make

Example: "If customers cite pricing as the main barrier, we will test a lower-entry plan. If they cite missing features, we will prioritize the top two features."

Step 2: Translate the decision into a measurable construct

Here the construct is "primary barrier to purchase." This tells you the question should be single-select.

Step 3: Draft a candidate option list from evidence

Use what you already know: support tickets, sales call notes, site search queries, prior surveys, interviews. If you have no evidence, do 5-10 short interviews before you lock the list.

Step 4: Make the options MECE (no overlaps, no gaps)

Remove duplicates, merge synonyms, ensure categories do not overlap, and add "Other (please specify)" if you cannot be exhaustive.

Step 5: Add analysis labels before launch

Decide which options will be grouped in reporting (e.g., "Pricing" vs "Value" might be separate in wording but combined in dashboards). This prevents post-hoc recoding surprises.

If you cannot name the chart you will build from the question, pause. The best MCQs are designed backwards from the decision and the analysis.
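The grouping decision in Step 5 can be written down as a small lookup table before launch. The sketch below is illustrative only: the option wordings, the group labels, and the `group_counts` helper are hypothetical, not part of any survey tool.

```python
from collections import Counter

# Hypothetical option wording mapped to the label used in reporting.
REPORTING_GROUP = {
    "Too expensive": "Pricing/Value",
    "Not worth the price": "Pricing/Value",
    "Missing features": "Product",
    "Hard to use": "Product",
    "Other": "Other",
}

def group_counts(responses):
    """Count responses by reporting group; fail loudly on unmapped options."""
    unmapped = sorted(set(responses) - set(REPORTING_GROUP))
    if unmapped:
        raise ValueError(f"No reporting group for: {unmapped}")
    return Counter(REPORTING_GROUP[r] for r in responses)

answers = ["Too expensive", "Missing features", "Not worth the price", "Other"]
print(group_counts(answers))
```

Raising an error on an unmapped option is a deliberate choice here: it surfaces a changed option list at analysis time instead of silently dropping answers.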

How to write the question stem (wording best practices)

A strong stem is specific, neutral, and anchored to a timeframe. For a fuller checklist, see our survey question writing tips.

- Be specific about the situation: "Thinking about your most recent order" is clearer than "Thinking about our service."

- Use a timeframe: "In the past 30 days" avoids memory drift and mismatched interpretations.

- Avoid double-barreled stems: Do not ask about "price and quality" in one question.

- Keep it neutral: Replace "How helpful was our amazing support team?" with "How helpful was our support team?"

- Match the respondent's knowledge: Do not ask customers about internal policies or metrics they cannot observe.

Pew Research Center's guidance emphasizes clear, specific wording and avoiding hidden assumptions in survey questions. See: Writing survey questions.

How to build answer choices that reduce bias and improve data quality

Most data quality problems in MCQs come from option design: overlaps, missing categories, and response patterns caused by order or scale imbalance.

1) Keep options mutually exclusive (no overlaps)

Overlaps create unusable data because the "right" answer depends on the respondent's interpretation.

| Weak version | Improved version |
|---|---|
| What is your age? | What is your age range? Options: 18-24; 25-34; 35-44; 45-54; 55-64; 65+; Prefer not to say |
| How often do you use our app? | In the past 30 days, how often did you use our app? Options: 0 times; 1-2 times; 3-5 times; 6-10 times; 11+ times |
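Numeric ranges like the ones above can be checked mechanically for overlaps and gaps before launch. This is a minimal sketch that assumes whole-number bins; the `find_bin_problems` helper and the `bad` example bins are hypothetical.

```python
from typing import Optional

# Each bin is (label, low, high); high=None means open-ended (e.g. "65+").
Bin = tuple[str, int, Optional[int]]

def find_bin_problems(bins: list[Bin]) -> list[str]:
    """Return overlap and gap problems for integer bins, sorted by lower bound."""
    problems = []
    ordered = sorted(bins, key=lambda b: b[1])
    # Compare each bin with the next one in ascending order.
    for (label_a, _, high_a), (label_b, low_b, _) in zip(ordered, ordered[1:]):
        if high_a is None:
            problems.append(f"'{label_a}' is open-ended but is not the last bin")
        elif low_b <= high_a:
            problems.append(f"'{label_a}' overlaps '{label_b}'")
        elif low_b > high_a + 1:
            problems.append(f"gap between '{label_a}' and '{label_b}'")
    return problems

good = [("18-24", 18, 24), ("25-34", 25, 34), ("35-44", 35, 44),
        ("45-54", 45, 54), ("55-64", 55, 64), ("65+", 65, None)]
bad = [("18-25", 18, 25), ("25-35", 25, 35), ("40+", 40, None)]

print(find_bin_problems(good))  # []
print(find_bin_problems(bad))   # one overlap, one gap
```

The check only covers the span between the lowest and highest bin; whether the list also needs "Under 18" or "Prefer not to say" is still a design decision.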

2) Be exhaustive (or add "Other")

If you cannot confidently list all plausible options, add "Other (please specify)". Put it near the bottom, and use the open-text responses to improve the list next time.

3) How many answer options should you use?

There is no universal number, but research on multiple choice options often finds diminishing returns from long lists and supports using fewer, higher-quality options over many weak ones. A classic review of exam-style MCQs discusses this "optimal number of options" tradeoff (Vyas & Supe, 2008).

For surveys (not exams), a practical guideline is:

- Single-select: aim for 4-7 options when feasible.

- Multi-select: keep lists short; if you need 15+ items, consider grouping or using a follow-up question.

4) Prevent order effects and other response bias

Option order can nudge selections, especially in long lists. This is one form of response bias. Reduce it with:

- Randomize non-ordinal option lists (e.g., reasons, channels, features).

- Do not randomize inherently ordered lists (e.g., age ranges, frequency, "Strongly disagree" to "Strongly agree").

- Use a balanced scale for evaluative questions (equal negative and positive options when appropriate).

- Keep scale direction consistent across the survey to avoid misclicks.
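If your survey tool does not randomize for you, the first two rules above can be sketched as follows. The `randomized_options` helper is hypothetical, and pinning "None" alongside "Other" at the bottom is an assumption of this sketch, not a rule from any standard.

```python
import random

def randomized_options(options, pinned=("None", "Other"), seed=None):
    """Shuffle options, keeping pinned labels at the bottom in their original order."""
    rng = random.Random(seed)  # seed per respondent for a reproducible order
    movable = [o for o in options if o not in pinned]
    fixed = [o for o in options if o in pinned]
    rng.shuffle(movable)
    return movable + fixed

issues = ["Could not find info", "Checkout issues", "Slow site/app",
          "Confusing pricing", "Bug/error", "None", "Other"]

# A respondent ID (hypothetical) used as the seed gives each person a stable order.
print(randomized_options(issues, seed="respondent-001"))
```

Ordered lists such as age ranges or agreement scales should bypass this function entirely and be shown in their natural order.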

Mode matters too: question behavior changes between mobile and desktop. The U.S. Census Bureau provides practical guidelines for questionnaire design across different modes (web, paper, etc.): Guidelines for designing questionnaires for administration in different modes.

When to add an open-ended follow-up (the "why" behind the pick)

MCQs tell you what respondents chose. When you need context, add a lightweight follow-up that is easy to answer.

Common, high-signal pairings:

- Barrier question (single-select) + why: "What is the main reason you did not complete your purchase?" then "What could we change to fix that?"

- Satisfaction rating + improvement prompt: "How satisfied are you?" then "What is the main thing we could do to improve your experience?"

- Multi-select issues + prioritize: "Which issues did you encounter? (Select all)" then "Which one mattered most?"

If the MCQ is required, consider making the open-text follow-up optional to reduce drop-off. You can still learn a lot from a smaller subset of thoughtful comments.

For more patterns and examples, see our guide on how to add an open-text follow-up without turning your survey into a wall of writing.

Multiple choice survey question examples (ready to copy)

Use these as starting templates. Adjust the timeframe, product names, and audience language to match your context. If you want broader inspiration beyond MCQs, browse our survey question examples bank.

Customer experience and product feedback

| Goal | Question (stem) | Suggested options / format |
|---|---|---|
| Measure satisfaction | Overall, how satisfied are you with your most recent experience? | 5-point satisfaction scale (Very dissatisfied to Very satisfied) |
| Identify drivers | Which part of your experience had the biggest impact on your satisfaction? | Single-select: Product quality; Delivery speed; Ease of use; Support; Price; Other (specify) |
| Find friction | Which issues, if any, did you run into? | Multi-select: Could not find info; Checkout issues; Slow site/app; Confusing pricing; Bug/error; None; Other |
| Improve onboarding | How easy was it to get started? | 7-point ease scale (Very difficult to Very easy) |
| Prioritize roadmap | Which one improvement would help you most? | Single-select from top 5-7 candidates + Other |
| Channel attribution | How did you first hear about us? | Single-select: Search; Social; Friend/colleague; Review site; Ad; Event; Other |
| Support quality | How would you rate the support you received? | 5-point rating scale + optional open-text "What could we do better?" |
| Retention risk | How likely are you to continue using us over the next 3 months? | 5-point likelihood scale (Very unlikely to Very likely) |
| Churn reason | What is the main reason you are considering leaving? | Single-select: Too expensive; Missing features; Hard to use; Switching to competitor; No longer needed; Other |
| Value perception | How would you describe the value for money? | 5-point value scale (Very poor value to Excellent value) |

If you are building a full CX questionnaire, you can also start from a template and customize: customer satisfaction survey template and satisfaction survey templates.

Employee and training surveys

| Goal | Question (stem) | Suggested options / format |
|---|---|---|
| Engagement driver | Which factor most affects your day-to-day engagement at work? | Single-select: Workload; Manager support; Role clarity; Growth; Compensation; Team culture; Other |
| Role clarity | I understand what is expected of me in my role. | Likert-type: Strongly disagree to Strongly agree |
| Manager support | How often do you receive useful feedback from your manager? | Frequency: Never; Rarely; Sometimes; Often; Very often |
| Training effectiveness | How useful was the training for your job? | 5-point usefulness scale + optional follow-up comment |
| Learning needs | Which topics would you like more training on? (Select all that apply) | Multi-select from a short list + Other |
| Process friction | Which processes slow you down most? | Multi-select: Approvals; Tools; Handoffs; Meetings; Rework; Context switching; Other |
| Retention signal | How likely are you to still be here in 12 months? | 5-point likelihood scale |
| Exit reason (optional) | What is the main reason you would consider leaving? | Single-select: Compensation; Career growth; Manager; Work-life balance; Role fit; Relocation; Other |

For full survey builds, see employee survey templates and employee engagement survey template.

Demographic multiple choice examples (with category design tips)

Demographic items are MCQs with strict requirements: categories should be mutually exclusive, collectively exhaustive, and sensitive to privacy. For a deeper set, see our guide on demographic questions.

| Question | Recommended options | Notes |
|---|---|---|
| What is your age range? | 18-24; 25-34; 35-44; 45-54; 55-64; 65+; Prefer not to say | Avoid overlapping ranges (e.g., 25-35 and 35-45). |
| Which region do you live in? | Use your actual service regions + Prefer not to say | Match how you will segment results. |
| What best describes your role? | IC/Contributor; People manager; Director+; Student; Retired; Other; Prefer not to say | Keep titles broad enough to reduce "Other" overflow. |
| How would you describe your organization size? | 1; 2-10; 11-50; 51-200; 201-1000; 1001+ | Make bins meaningful for your analysis (and sales model). |

How to analyze multiple choice survey results (single vs. multi-select)

MCQs are easy to chart, but only if you summarize them in a way that matches how people responded. For a broader walkthrough, see how to analyze survey data.

Single-select: counts, percent, and trends

For single-select questions, the standard outputs are:

- n (count) by option

- % of respondents by option (sums to about 100% once missing responses are excluded)

- Trend over time (if the question wording and option list stayed consistent)
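The count and percent outputs above take only a few lines to compute. This is a minimal sketch using the Python standard library; the `single_select_summary` helper and the sample answers are hypothetical, and missing responses are assumed to be stored as `None`.

```python
from collections import Counter

def single_select_summary(responses):
    """Return {option: (count, percent)} with missing (None) answers excluded."""
    answered = [r for r in responses if r is not None]
    counts = Counter(answered)
    n = len(answered)
    return {opt: (c, round(100 * c / n, 1)) for opt, c in counts.most_common()}

responses = ["Too expensive", "Missing features", "Too expensive", None, "Hard to use"]
for option, (count, pct) in single_select_summary(responses).items():
    print(f"{option}: n={count}, {pct}%")
```

Because the denominator is the number of people who answered, the percentages here sum to 100 (up to rounding); report the number of missing responses separately.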

If you plan to segment (for example, by region, role, or plan tier), confirm you have enough responses per segment to draw conclusions. Use this guide on sample size for surveys as a reality check before you over-interpret small groups.

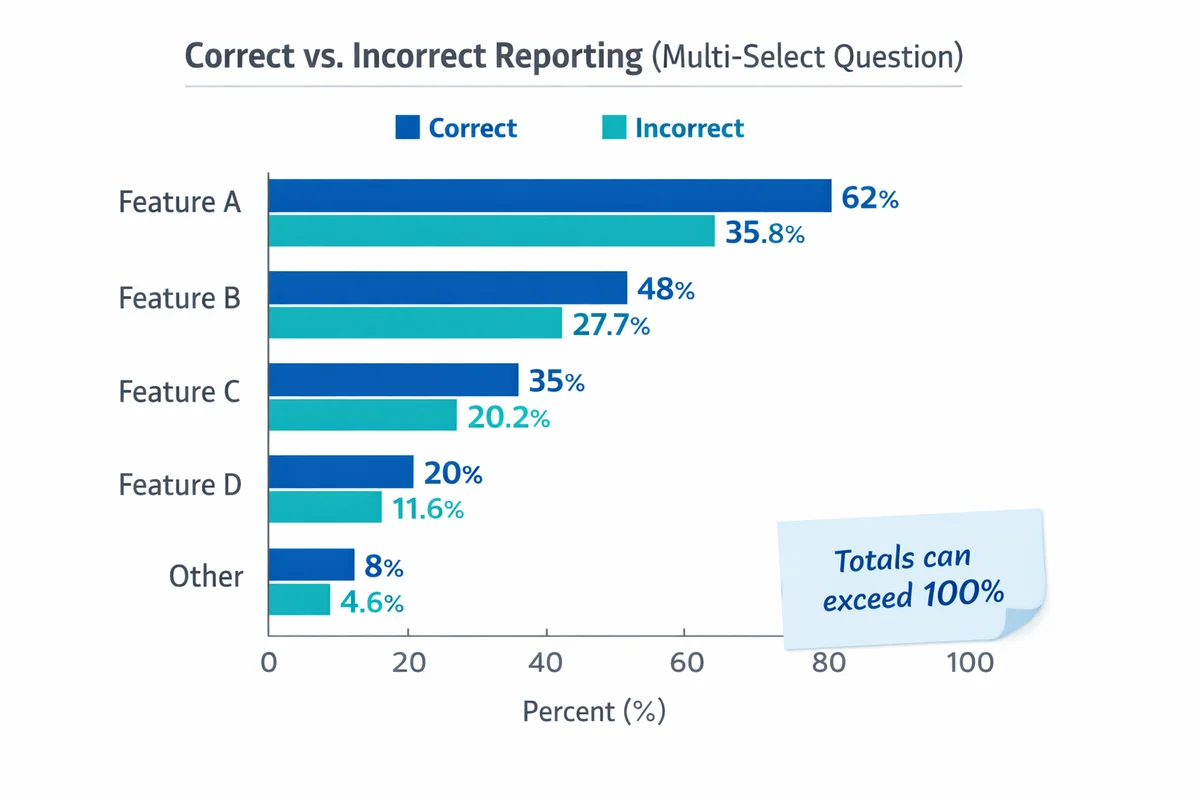

Multi-select (checkboxes): do not force it to 100%

Checkbox questions are multiple response data. Each option is essentially its own yes/no indicator. Good reporting includes:

- % of respondents selecting each option (each option can be 0-100%).

- Average number of selections per respondent (shows how many items a typical respondent picks).

- Top combinations (optional): which pairs co-occur most often.

If 60% selected "Pricing" and 55% selected "Missing features," the percentages sum to 115%, and that is not an error. Because each respondent can tick several boxes, it means at least 15% of respondents selected both.
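The three checkbox summaries can be sketched as below. The `multi_select_summary` helper and the sample data are hypothetical, and the sketch assumes every entry is a respondent who saw the question, so the denominator is all respondents rather than all ticks.

```python
from collections import Counter
from itertools import combinations

def multi_select_summary(responses):
    """responses: one set of selected options per respondent who saw the question."""
    n = len(responses)
    if n == 0:
        raise ValueError("no respondents")
    option_counts = Counter(opt for selected in responses for opt in selected)
    # Sort each selection so that (A, B) and (B, A) count as the same pair.
    pair_counts = Counter(
        pair for selected in responses for pair in combinations(sorted(selected), 2)
    )
    pct = {opt: round(100 * c / n, 1) for opt, c in option_counts.items()}
    avg_selections = sum(len(s) for s in responses) / n
    return pct, avg_selections, pair_counts.most_common(3)

responses = [
    {"Pricing", "Missing features"},
    {"Pricing"},
    {"Missing features", "Bug/error"},
    {"Pricing", "Missing features"},
]
pct, avg_selections, top_pairs = multi_select_summary(responses)
print(pct)             # each option is a % of the 4 respondents
print(avg_selections)  # 1.75
print(top_pairs)
```

In this sample the option percentages total 175%, which is expected: the denominator is respondents, not selections.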

Ranking questions: summarize as "top choice" plus average rank

Ranking outputs can be confusing. Two practical summaries are:

- % who ranked each item #1 (easy headline)

- Average rank (lower is better), plus distribution if needed
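Both ranking summaries can be computed from the raw orderings. This sketch assumes each respondent's answer is stored as a list ordered best-first; the `ranking_summary` helper and the sample items are hypothetical.

```python
def ranking_summary(rankings):
    """rankings: one list per respondent, items ordered best-first."""
    n = len(rankings)
    items = sorted({item for r in rankings for item in r})
    summary = {}
    for item in items:
        # 1-based rank of this item for every respondent who ranked it.
        ranks = [r.index(item) + 1 for r in rankings if item in r]
        summary[item] = {
            "pct_ranked_first": round(100 * ranks.count(1) / n, 1),
            "avg_rank": round(sum(ranks) / len(ranks), 2),
        }
    return summary

rankings = [
    ["Speed", "Price", "Support"],
    ["Price", "Speed", "Support"],
    ["Speed", "Support", "Price"],
    ["Price", "Support", "Speed"],
]
for item, stats in ranking_summary(rankings).items():
    print(item, stats)
```

If respondents may rank only some items, the average rank is taken over those who ranked the item, so compare averages with care when the counts differ.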

Where MCQs fit in overall survey design

MCQs reduce respondent burden, but too many in a row can feel repetitive. In your survey design:

- Start with easy, concrete MCQs (experience, behavior), then move to evaluative items.

- Group related questions and reuse the same response scales to reduce cognitive load.

- Place demographics near the end unless they are needed for routing/skip logic.

Pre-launch quality check (10-minute MCQ review)

- Stem is specific: Clear timeframe, clear topic, no jargon.

- Options are MECE: No overlaps; no missing obvious category.

- One concept per question: Not double-barreled.

- "Other" is used intentionally: Included when needed; reviewed after the field period.

- Option order matches the risk: Randomize non-ordinal lists; do not randomize ordered scales.

- Scale labels are complete: Endpoints labeled at minimum; midpoint meaning is clear.

- Matrix grids are short: Avoid long grids that invite straightlining.

- Mobile check: Test tapping, scrolling, and dropdown usability on a phone.

- Missing data plan: Decide when questions are required and when "Not applicable" is needed.

- Analysis-ready coding: You can name the chart and the segmentation you will run.

References

- Pew Research Center. (n.d.). Writing survey questions.

- Martin, E., Childs, J. H., DeMaio, T., Hill, J., Reiser, C., Gerber, E., Styles, K., & Dillman, D. (2007). Guidelines for designing questionnaires for administration in different modes (RSM2007-42). U.S. Census Bureau.

- Vyas, R., & Supe, A. (2008). Multiple choice questions: a literature review on the optimal number of options. National Medical Journal of India, 21(3), 130-133.

Frequently Asked Questions

How many options should a multiple choice survey question have?

Use as few as you can while still covering realistic answers. For many survey MCQs, 4-7 options is workable. If your list keeps growing, consider grouping options, using a follow-up question, or adding an "Other" option. For background on the tradeoffs, see the review on option counts: Vyas & Supe (2008).

When should I randomize answer choices?

Randomize lists that have no natural order (reasons, channels, feature lists) to reduce order effects. Do not randomize ordered categories (age ranges, frequency scales, agreement scales) because order carries meaning. For more on bias patterns, see response bias.

Should I include "Other (please specify)"?

Include it when you cannot confidently cover all plausible answers, or when you expect local terminology you have not captured. Review "Other" write-ins after the field period and convert frequent themes into future options.

How do I analyze "select all that apply" questions?

Report each option as the percent of respondents who selected it (each option can be 0-100%). Add the average number of selections per respondent, and optionally the most common combinations. For more reporting patterns, see survey data analysis.

When is a dropdown better than radio buttons?

Use dropdowns for long lists where showing all options would be overwhelming (for example, a list of countries). For short lists (roughly 2-8 options), radio buttons are usually faster and reduce missed options, especially on mobile.

How do I write better multiple choice questions overall?

Start from the decision you need to make, then choose the simplest format that measures it. Write a neutral, specific stem, make options MECE, and test on mobile. If you need the respondent's reasoning, add a short optional follow-up using open-ended questions. Pew's guidance on clear wording is also a strong baseline: Pew Research Center.