Key Takeaways

- Closed-ended questions measure; open-ended questions explain: Use closed-ended for counts, trends, and benchmarks; use open-ended to uncover reasons, language, and surprises.

- Most surveys work best with a mix: A common pattern is a closed metric (rating, choice) followed by a targeted open follow-up ("What is the main reason?") for context.

- Closed-ended quality depends on the options: Missing choices, overlapping ranges, or biased labels can distort results more than you think.

- Open-ended quality depends on the prompt: Keep it specific, ask one thing, and tell respondents what kind of detail you want.

- Plan analysis before you ask open-text: If you cannot code and summarize responses consistently, limit open-ended items or ask them conditionally.

Open-ended and closed-ended questions both have a place in survey design. The difference is not just the response format. It is also the kind of data you can produce (qualitative vs quantitative), how much effort you ask of respondents, and how defensible your results are when someone asks, "How do you know?"

If you need a number you can track over time, start closed-ended. If you need to discover what you should measure (or why a score changed), add an open-ended question.

Open-ended vs closed-ended: definitions

Open-ended questions let respondents answer in their own words (free text). They are useful for discovery, explanation, and capturing the language people naturally use. See more open-ended questions and open-ended question examples.

Closed-ended questions provide predefined response options (a list or a scale). They are designed for fast completion and straightforward analysis. Common closed formats include multiple-choice questions, yes/no, and structured scales like Likert scale questions and rating scale questions.

Survey research guidance typically frames the choice around time constraints, what you already know about likely answers, and the level of detail needed. Those factors show up consistently in university and evaluation guidance on question formats (for example, St. Olaf College's overview, UCLA's teaching materials on open vs closed questions, and the Pew Research Center methodology guide on open vs closed-ended questions).

Side-by-side comparison (with trade-offs)

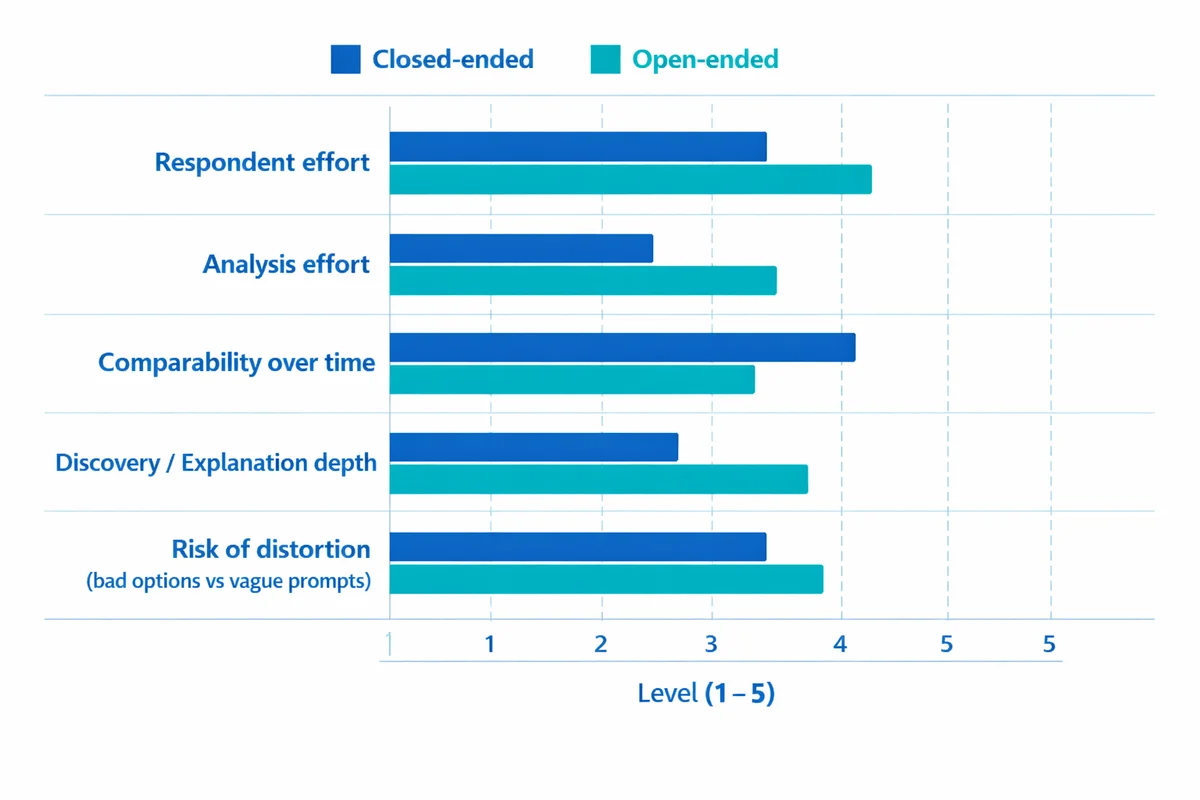

| Dimension | Closed-ended | Open-ended |
|---|---|---|
| What you get | Structured categories or numbers | Unstructured text (themes, examples, language) |
| Best for | Benchmarking, dashboards, segmentation, statistical tests | Exploration, "why" and "how," unexpected issues |
| Respondent effort | Low to moderate (fast) | Moderate to high (typing and thinking) |
| Analysis effort | Low (counts, averages) | Higher (coding, summarizing, text analysis) |
| Risk | Bad options can bias results or hide reality | Vague prompts produce vague answers; coding can drift |
| When it fails | When you do not know the answer options yet | When you need fast, comparable metrics at scale |

A key limitation of closed-ended questions is that your response options can shape what people report. This can create measurement error if options are incomplete, leading, or poorly labeled. That is one reason response bias and wording effects matter even when the question looks "objective."

Open-ended items can also introduce bias in a different way. They tend to advantage respondents who are more articulate or more motivated to type, which can skew what you hear. Peer-reviewed work comparing open and closed formats often finds that they do not behave identically, and they can produce different kinds of information even when they target the same concept (for example: Bleuel (Pepperdine), Information Differences Between Closed-ended and Open-ended Survey Questions).

When to use closed-ended questions

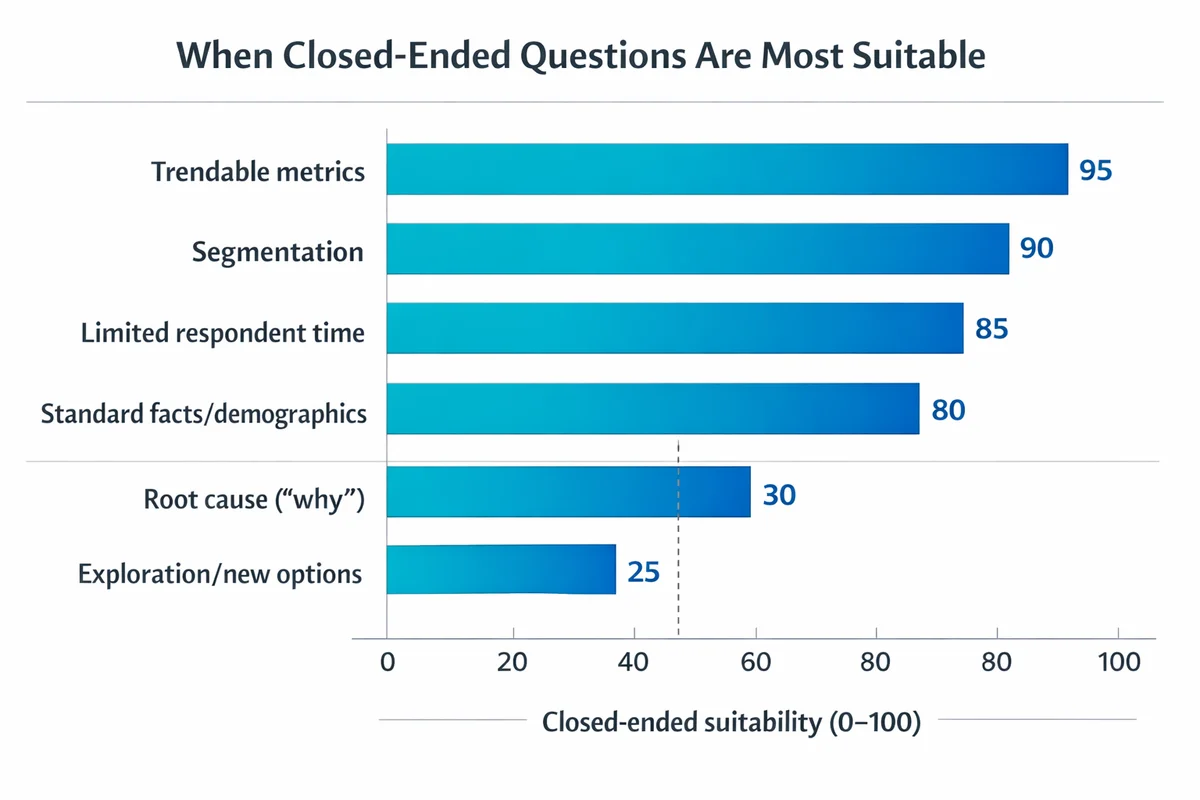

Choose closed-ended questions when you already have a good sense of the plausible answers and you need data that is consistent across respondents.

- You need trendable metrics: Satisfaction over time, NPS-style benchmarks, service KPIs, or goal tracking.

- You need segmentation: Comparing groups (region, plan type, tenure, role) requires consistent categories.

- You have limited respondent time: Short surveys, mobile contexts, or high-frequency pulses.

- You are collecting standard facts: Many demographic questions work best as closed-ended with standard categories (and a clear "Prefer not to say" when appropriate).

Even in demographics, format matters. Research comparing open vs closed demographic items has found differences in completeness and usability depending on the format and the audience (see, for example: Griffith et al. -- comparison of open vs closed questionnaire formats).

Closed-ended questions are also the right starting point when you must protect comparability across time, teams, or countries. If you change response options frequently, you lose the ability to interpret movement.

When to use open-ended questions

Use open-ended questions when you want to discover what is going on, not just measure how much of it exists.

- You do not know the options yet: Early-stage research, pilots, new products, new programs, new markets.

- You need root cause: Understanding why a rating is low (or why it dropped) requires language and detail.

- You want verbatims: Quotes for reports, examples for training, or stories that illustrate the human impact.

- You want to reduce assumption: Let respondents define what "good" or "frustrating" means in their context.

Open-ended questions work best when they are specific. "Any other comments?" usually produces either silence or a random mix that is hard to act on. A focused prompt ("What is the main reason for your rating?") typically yields more usable text.

A simple decision guide (pick, then mix)

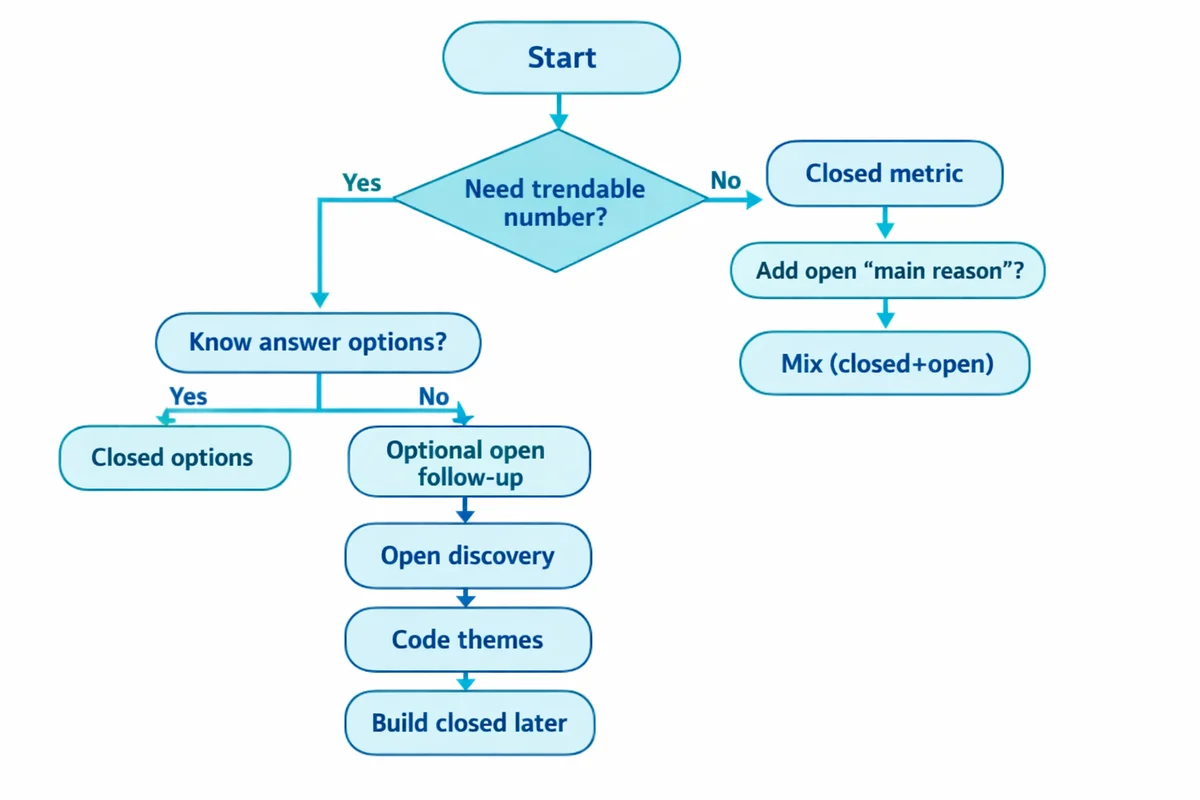

If you are deciding between open and closed, start by answering these three questions:

1) What decision will this answer drive?

If you need to compare teams, track change, or set targets, you need a closed-ended metric. If you need to understand what to fix, add open text.

2) Do you already know the plausible answers?

If yes, closed-ended is efficient. If not, start open-ended (or run a small pilot with open-text, then convert to closed options).

3) Can you actually analyze open-text at your expected volume?

If you will get 2,000 verbatims and no one owns coding, keep open-ended items limited or conditional.

Many teams ask open-ended questions because they feel "more honest," then end up summarizing them with a few cherry-picked quotes. If you cannot commit to a basic coding workflow, use fewer open questions and write them more tightly.

Also consider who you are surveying. The same question can behave differently depending on audience and mode (mobile vs desktop, anonymous vs named). Question format choices interact with sampling basics and representativeness: who responds, and how, shapes what you learn.

How to combine open and closed questions (3 proven patterns)

In practice, the strongest surveys use both formats intentionally, not randomly. Here are three patterns that reliably produce actionable data.

1) The "rating + why" follow-up

Ask a closed metric first, then a single open-ended follow-up that targets the cause.

- Closed: "Overall, how satisfied are you with the purchase experience?" (use a 1-10 rating question or stars)

- Open: "What is the main reason for your score?"

This is a go-to approach for product feedback survey questions because it preserves a trackable KPI while capturing the language behind it.

2) Pilot then scale

Use open-ended questions in a pilot to discover the true answer options, then convert them into closed-ended response options for the full rollout.

- Phase 1 (small sample): "What problems did you run into during onboarding?" (open text)

- Phase 2 (full sample): "Which of these onboarding issues did you experience? (Select all that apply)" (closed list based on Phase 1 themes, plus "Other")

3) Conditional open-ended (ask only when needed)

Use skip logic so only a subset sees the open-text item (reducing burden while preserving depth where it matters).

- If satisfaction is 0-6: show "What could we change to improve your experience?"

- If satisfaction is 7-10: show "What should we keep doing?"

This pattern is especially useful in long programs like employee engagement survey questions, where too many free-text prompts can lower completion.
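The branching rule above can be sketched in a few lines of Python. This is a minimal illustration, assuming a 0-10 satisfaction score; the function name `follow_up_prompt` is hypothetical and does not correspond to any particular survey tool's skip-logic API.

```python
from typing import Optional


def follow_up_prompt(score: Optional[int]) -> Optional[str]:
    """Pick the open-text follow-up for a 0-10 satisfaction score.

    Hypothetical helper: real survey platforms configure this as skip logic,
    but the rule itself is the same.
    """
    if score is None:
        return None  # no rating given, so no follow-up is shown
    if not 0 <= score <= 10:
        raise ValueError("score must be between 0 and 10")
    if score <= 6:
        return "What could we change to improve your experience?"
    return "What should we keep doing?"


print(follow_up_prompt(4))
print(follow_up_prompt(9))
```

Each respondent sees at most one open-text prompt, which is what keeps the burden low.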

Writing better open and closed questions (without bias)

Good wording matters more than the format. If you want a deeper walkthrough, see how to write survey questions and the related guidance on writing unbiased questions.

Closed-ended: make options complete and usable

- Make choices mutually exclusive: Age ranges must not overlap (use 18-24, 25-34, etc., not 18-25, 25-34).

- Make choices collectively exhaustive: Include "Not applicable" or "Other (please specify)" when needed.

- Label scales clearly: For agree/disagree scale items, keep labels balanced and consistent across questions.

- Avoid hidden assumptions: "How helpful was our chatbot?" assumes they used it. Ask usage first or include "I did not use it."
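For numeric brackets such as age ranges, the "mutually exclusive" and "collectively exhaustive" checks are easy to automate before a survey goes live. The sketch below assumes inclusive integer ranges; `check_ranges` is a hypothetical helper written for illustration.

```python
def check_ranges(ranges):
    """Return a list of problems found in inclusive (low, high) integer ranges."""
    problems = []
    ordered = sorted(ranges)
    # Compare each range with the next one after sorting by lower bound.
    for (lo_a, hi_a), (lo_b, hi_b) in zip(ordered, ordered[1:]):
        if lo_b <= hi_a:
            problems.append(f"overlap: {lo_a}-{hi_a} and {lo_b}-{hi_b}")
        elif lo_b > hi_a + 1:
            problems.append(f"gap: nothing covers {hi_a + 1}-{lo_b - 1}")
    return problems


print(check_ranges([(18, 24), (25, 34), (35, 44)]))  # clean brackets
print(check_ranges([(18, 25), (25, 34)]))            # 25 fits two options
```

It only checks the space between brackets. Whether the lowest and highest brackets cover your whole audience is still a judgment call.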

Open-ended: ask one thing and set the frame

University-based questionnaire guidance for open-ended items commonly recommends keeping prompts specific, using neutral wording, and avoiding multi-part prompts (see University of Florida guidance: https://edis.ifas.ufl.edu/publication/PD067).

- Ask for the "main" reason: It helps respondents focus and helps you code.

- Be concrete: "What part of the process slowed you down?" beats "Tell us your thoughts."

- Do not smuggle in blame: "What did you do wrong?" is not neutral.

- Place open-text where attention is highest: Early or right after a relevant closed metric.

Both formats are vulnerable to leading questions and bias. With closed-ended items, the bias often comes from the options. With open-ended items, it often comes from framing ("What did you like about..." assumes they liked it).

Common pitfalls (and how to fix them)

- Pitfall: Too many open-ended questions.

Fix: Keep open-text to the few places where explanation is essential; use conditional open-ended follow-ups.

- Pitfall: Closed lists that miss the real answers.

Fix: Pilot with open-text first, then build response options from real language; include "Other" when appropriate.

- Pitfall: Double-barreled questions.

Fix: Separate ("How satisfied are you with speed?" and "...with accuracy?") so people are not forced to average their feelings.

- Pitfall: Scales that invite acquiescence.

Fix: Avoid vague agree/disagree when a direct measure is possible ("How often..." or "How confident...").

- Pitfall: Treating open-text like "color" only.

Fix: Commit to a repeatable coding approach so themes can be counted and tracked.

How to analyze open-ended responses (a lightweight workflow)

Competitor articles often stop at "open-ended is harder to analyze." The good news is you can do a credible analysis without a PhD or a full qualitative study. The key is to be explicit and consistent.

Recent peer-reviewed work shows why combining open and closed data can be powerful, and how text analysis methods (including NLP) can help interpret open-text at scale (for example: Pew Research Center -- topic modeling for open-ended survey responses; Hansen & Swiderska (2024), Behavior Research Methods -- integrating open- and closed-ended responses).

Step 1: Decide what "counts" as an answer

Will you exclude blanks, "N/A," and one-word responses? Define this up front so your totals are interpretable.
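One way to make that rule explicit is to write it down as a small filter. The sketch below is an assumption-laden example: the `NON_ANSWERS` list and the two-word minimum are illustrative choices, not a standard, and you should adjust both to your own data.

```python
# Illustrative list of placeholder replies; extend it for your own survey.
NON_ANSWERS = {"", "n/a", "na", "none", "nothing", "-", "."}


def is_substantive(text, min_words=2):
    """Return True if a free-text reply should count as an answer."""
    cleaned = (text or "").strip().lower()
    if cleaned in NON_ANSWERS:
        return False
    # One-word replies are excluded by default; lower min_words to keep them.
    return len(cleaned.split()) >= min_words


replies = ["N/A", "  ", "slow", "Checkout was slow on mobile"]
print([r for r in replies if is_substantive(r)])
```

Report the number of excluded replies alongside your totals so readers can see the denominator.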

Step 2: Build a codebook from a sample

Read 50-100 responses (or 10% of your data, whichever is smaller). Create 6-15 theme codes that match what you see, not what you expected.

Step 3: Code consistently (and allow multi-code)

Many comments include multiple issues. Decide whether you will allow multiple codes per response and how you will report totals (responses vs mentions).
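The difference between counting responses and counting mentions is easier to see with a small example. The sketch below uses naive keyword matching as a stand-in for human coding; the `CODEBOOK` themes and keywords are invented for illustration, and substring matching will produce false positives (for example, "help" also matches "helpful").

```python
from collections import Counter

# Hypothetical codebook: theme -> keywords that signal it.
CODEBOOK = {
    "speed": ["slow", "wait", "delay"],
    "pricing": ["price", "expensive", "cost"],
    "support": ["support", "agent", "help"],
}


def code_response(text, codebook=CODEBOOK):
    """Return the set of themes whose keywords appear in the text (multi-code)."""
    lowered = text.lower()
    return {
        theme
        for theme, keywords in codebook.items()
        if any(keyword in lowered for keyword in keywords)
    }


def summarize(responses):
    """Report totals both ways: per response and per mention."""
    coded = [code_response(r) for r in responses]
    mentions = Counter(theme for codes in coded for theme in codes)
    return {
        "responses": len(responses),
        "coded_responses": sum(1 for codes in coded if codes),
        "mentions": sum(mentions.values()),
        "by_theme": dict(mentions),
    }


print(summarize([
    "Support was slow and the price is too high",
    "Too expensive",
    "Love it",
]))
```

Here three responses produce four mentions, so theme percentages add up to more than 100% if you divide by responses. State which denominator you used.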

Step 4: Quantify the themes

Count themes, then cross-tab them by key segments (team, region, plan). This turns text into action.
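A cross-tab of themes by segment needs nothing more than the standard library. The sketch below assumes each response has already been coded into a set of themes; the segment names and the `crosstab` helper are hypothetical.

```python
from collections import Counter, defaultdict


def crosstab(coded_rows):
    """Share of responses mentioning each theme, per segment.

    coded_rows: iterable of (segment, set_of_themes) pairs.
    """
    totals = Counter()
    counts = defaultdict(Counter)
    for segment, themes in coded_rows:
        totals[segment] += 1  # every response counts toward the denominator
        for theme in themes:
            counts[segment][theme] += 1
    return {
        segment: {theme: n / totals[segment] for theme, n in counts[segment].items()}
        for segment in totals
    }


rows = [
    ("EU", {"speed"}),
    ("EU", {"speed", "pricing"}),
    ("US", {"pricing"}),
    ("US", set()),
]
for segment, shares in crosstab(rows).items():
    print(segment, shares)
```

Responses with no codes still count toward the segment total, so the shares reflect everyone who answered, not only those who raised an issue.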

Step 5: Add representative quotes (not just the spicy ones)

Pick quotes that illustrate the most common themes and the highest-impact outliers. Make sure quotes match the quantified story.

If you use text analysis tools (including NLP), use them to accelerate clustering and summarization, not to replace judgment. Always spot-check themes against raw comments, especially for small subgroups where errors can mislead.

If you are collecting open-ended answers specifically to test the impact of misinformation, message effects, or similar constructs, be aware that open and closed question formats can behave differently in web-based settings (see: Connor Desai & Reimers (2018), Behavior Research Methods -- open vs closed questions in web research).

Examples you can copy (open vs closed pairs)

Below are practical pairs that show how the formats work together. The goal is not to choose one forever, but to choose the right tool for each job.

| Use case | Closed-ended (measure) | Open-ended (explain) |
|---|---|---|
| Product feedback | "How would you rate the product overall?" (1-10) | "What is the main reason for your score?" |
| Support interaction | "Was your issue resolved?" (Yes/No) | "If no, what was missing or unclear?" |
| Website friction | "What stopped you from completing checkout?" (Select one) | "What exactly happened when you tried?" |
| Employee engagement | "I see a path for growth here." (Strongly disagree to strongly agree) | "What would most improve your growth opportunities?" |
| Training evaluation | "How confident do you feel applying the skill?" (Not at all to Very) | "What part still feels hardest to apply?" |
| Demographics | "What is your role?" (Manager/IC/Executive/etc.) | "If other, please describe your role in a few words." |

Notice the pattern: closed questions create structure; open questions are targeted and conditional. That combination reduces respondent burden while preserving the context needed to act.

Closed questions are efficient, but they can restrict what respondents can tell you. Open questions can reveal the unexpected, but they are harder to process and compare (see: Royal Academy of Engineering guidance on open vs closed questions).

If you want ready-made survey layouts that already mix formats well, start with survey templates or browse a category like employee survey templates or satisfaction survey templates.

References

- Connor Desai & Reimers (2018), Behavior Research Methods -- open vs closed questions in web research

- Bleuel (Pepperdine), Information Differences Between Closed-ended and Open-ended Survey Questions

- Griffith et al. -- comparison of open vs closed questionnaire formats

- Pew Research Center -- topic modeling for open-ended survey responses

- Hansen & Swiderska (2024), Behavior Research Methods -- integrating open- and closed-ended responses

- Pew Research Center methodology guide on open vs closed-ended questions

- O'Leary, J. L., & Israel, G. D. (n.d.). The Savvy Survey #6b: Constructing Open-Ended Items for a Questionnaire (Publication PD067). University of Florida IFAS Extension.

- Royal Academy of Engineering. (n.d.). The difference between open-ended and closed questions.

Frequently Asked Questions

How many open-ended questions should a survey include?

As a starting point, include only the open-ended questions you can realistically analyze. Many general-purpose surveys do well with 1-3 targeted open-text items, typically placed after key closed metrics (or shown conditionally to specific groups). If open-text is your primary objective, design a shorter survey overall to offset the extra effort.

Are Likert scales and rating scales closed-ended questions?

Yes. Both Likert scale questions (e.g., strongly disagree to strongly agree) and rating scale questions (e.g., 1-10, stars) are closed-ended because respondents select from predefined options.

When should I add an "Other (please specify)" option?

Add "Other" when you cannot confidently list all plausible options, or when you expect meaningful edge cases (job roles, industries, tools used). Keep the open-text field short and specific ("Other role:") and review "Other" responses periodically to decide whether they should become a standard option.
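A periodic review of "Other" text can be as simple as counting normalized entries. The sketch below is illustrative only: `other_candidates` is a hypothetical helper, and the 5% threshold is an assumption to tune, not a recommendation from the sources cited here.

```python
from collections import Counter


def other_candidates(other_texts, min_share=0.05):
    """Return (text, count) pairs common enough to consider as standard options.

    min_share is the share of non-empty "Other" entries, an assumed threshold.
    """
    cleaned = [t.strip().lower() for t in other_texts if t and t.strip()]
    total = len(cleaned)
    counts = Counter(cleaned)
    return [
        (text, n)
        for text, n in counts.most_common()
        if total and n / total >= min_share
    ]


print(other_candidates(["Contractor", "contractor ", "Intern", ""], min_share=0.5))
```

Exact-match counting misses spelling variants ("contractor" vs "contract worker"), so skim the long tail by hand as well.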

Can I turn open-ended responses into quantitative results?

Yes. The standard approach is coding: define a set of themes and tag each response (or each mention) with one or more codes. Then you can report counts and percentages by theme and segment. For large volumes, text analysis methods can help accelerate grouping, but you should still validate themes against raw comments (see examples of combining open and closed data with NLP: Pew Research Center -- topic modeling for open-ended survey responses).

Which format is better for avoiding bias?

Neither format is automatically unbiased. Closed-ended questions can bias results through missing or leading options. Open-ended questions can bias results by favoring people who are more willing or able to write detailed responses. The best protection is neutral wording, balanced options, and a plan to detect response bias (for example, by monitoring "Other" text, checking for straight-lining on scales, and reviewing nonresponse patterns).
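Straight-lining, where a respondent gives the same answer to every item in a scale block, is one of the easier checks to automate. The sketch below assumes numeric scale answers with `None` for skipped items; the `min_items` threshold is an illustrative assumption.

```python
def is_straight_lining(answers, min_items=4):
    """Flag a respondent who gave the identical answer to every answered item."""
    given = [a for a in answers if a is not None]
    # Short blocks are not flagged: identical answers on two items prove little.
    return len(given) >= min_items and len(set(given)) == 1


print(is_straight_lining([3, 3, 3, 3, 3]))  # same answer throughout
print(is_straight_lining([3, 3, 4, 3]))     # some variation
```

A flag is a prompt to look closer, not proof of careless responding. Some people genuinely hold the same view across items.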