Key Takeaways

- Start with decisions: Every question should connect to a real analysis or action; delete the rest.

- Make questions answerable: Use simple wording, define terms, set a time frame, and ask about observable behavior when possible.

- Reduce bias on purpose: Keep wording and options neutral, avoid agree/disagree traps, and plan for response bias.

- Design answers for analysis: Use mutually exclusive and exhaustive options, label scales clearly, and keep scales consistent.

- Test before launch: Pretest for comprehension, pilot for data quality, then iterate before collecting your full sample.

What these principles do (and what they do not)

Questionnaire design is the craft of turning a measurement goal into questions people can answer accurately and consistently. Good design reduces misunderstandings, guessing, and avoidable drop-off.

These principles cannot fix the wrong audience. If you survey the wrong people, even perfect questions produce the wrong conclusions. (If you are still deciding who to survey, start with sampling for surveys.)

A question is well-designed when two different respondents with the same experience are likely to interpret it the same way and choose the same answer.

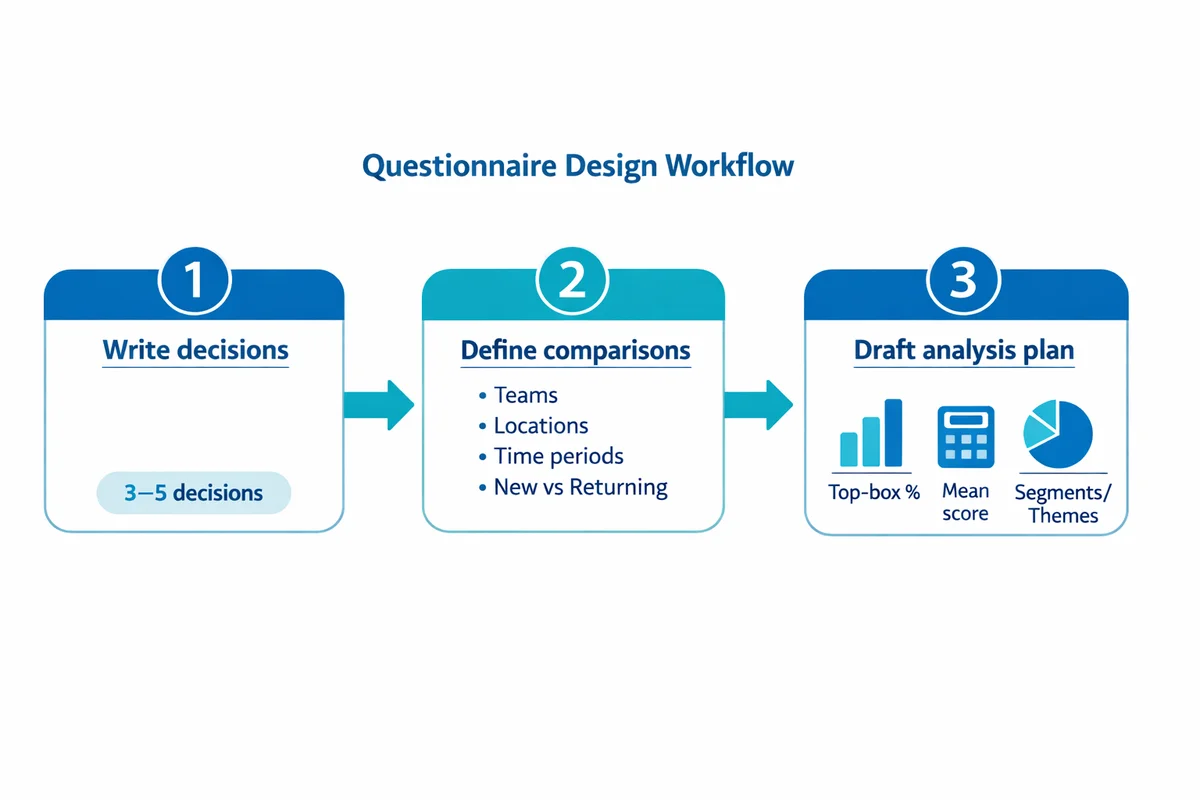

Principle 1: Start with purpose, decisions, and a draft analysis

The fastest way to improve a questionnaire is to make sure each item earns its place. Before you write, list the decisions the results will support (prioritize features, improve training, change policy, etc.).

Then sketch how you will analyze the data. If you cannot describe how an item will be used, it is a candidate for deletion or revision.

Write 3-5 decisions

Example: "Decide whether to extend support hours" or "Identify the top 2 drivers of churn."

Define what you must compare

Teams, locations, time periods, new vs. returning customers, etc. This influences wording and answer options.

Draft a one-page analysis plan

List the key outputs (top-box %, mean score, segments, verbatim themes). Questionnaire design should make those outputs easy.
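As a rough illustration of two of those outputs, the sketch below computes a top-2-box percentage and a mean score for a single rating item. The function name `top_box_share`, the 5-point scale, and the ratings are all made up for the example; it is not a prescribed analysis method.

```python
from statistics import mean

def top_box_share(ratings, scale_max=5, top_n=2):
    """Share of answered ratings in the top `top_n` points of the scale."""
    answered = [r for r in ratings if r is not None]
    if not answered:
        return None
    threshold = scale_max - top_n + 1  # e.g. 4 on a 5-point scale
    return sum(r >= threshold for r in answered) / len(answered)

# Made-up 5-point satisfaction ratings; None marks a skipped item.
ratings = [5, 4, 3, 4, 2, None, 5, 1]
answered = [r for r in ratings if r is not None]

print(f"Top-2-box: {top_box_share(ratings):.0%}")
print(f"Mean score: {mean(answered):.2f}")
```

Writing even this much before fielding the survey shows whether each item yields a number you can report, and how skipped items will be handled.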

Medical and clinical survey guidance often puts "decide what data you need" as the first step for a reason: it prevents scope creep and reduces respondent burden. See Stone (1993) for a concise checklist of questionnaire design steps (BMJ).

Principle 2: Write questions people can understand and answer

Clarity is not only about simple words. It is also about making recall possible, reducing ambiguity, and avoiding assumptions about what respondents know.

If you want a practical companion to this section, see our guide on how to write survey questions.

Use familiar language (and define terms you must use)

- Prefer common words: "help" beats "assistance"; "buy" beats "purchase."

- Define unavoidable terms: If you must use "incident" or "case," define it in-line.

- Avoid acronyms: If you cannot avoid them, spell out once, then reuse.

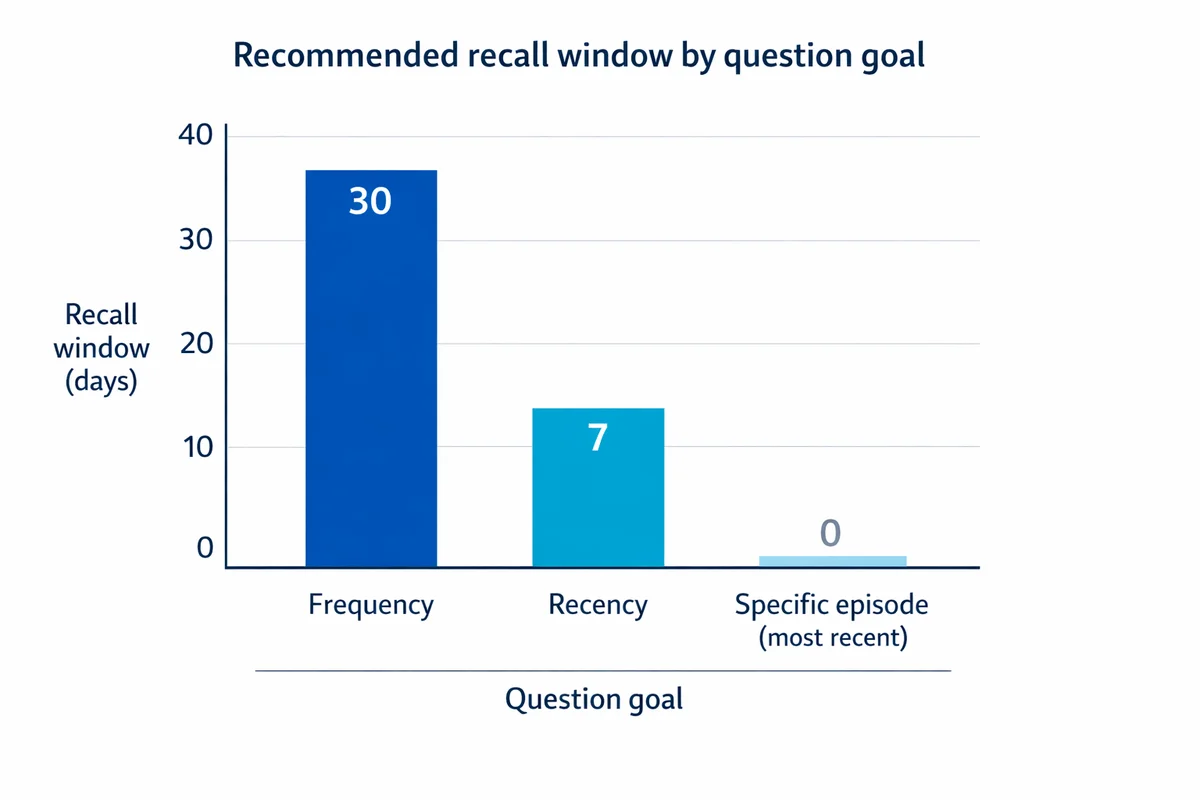

Make the recall task realistic

Many "bad" questions are really memory problems. Ask about a time period people can recall, and match the time period to the behavior.

| Goal | Hard to answer | Better |
|---|---|---|
| Frequency | "How often do you contact support?" | "In the past 30 days, how many times did you contact support?" |
| Recency | "Do you regularly exercise?" | "In the past 7 days, on how many days did you do at least 30 minutes of exercise?" |
| Specific episode | "Was onboarding smooth?" | "Thinking about your most recent onboarding, how easy was it to complete setup without help?" |

Survey design reviews in healthcare and social science repeatedly emphasize intelligibility and unambiguous wording as core criteria (Rattray and Jones, 2007: J Clin Nurs).

Ask about what respondents can reasonably know

Do not force respondents to guess internal processes, other people, or technical root causes.

Instead of: "Why did the delivery arrive late?"

Use: "Which of these happened with your most recent delivery? (Select all that apply)"

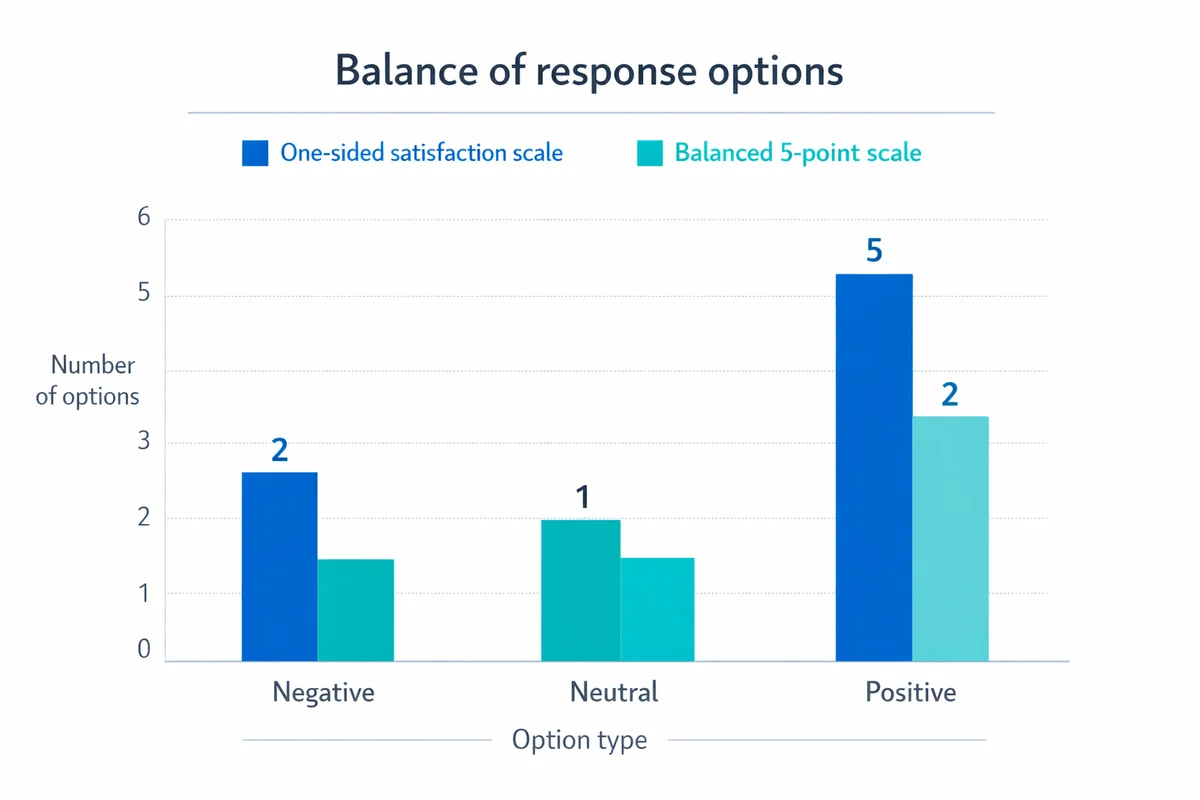

Principle 3: Keep wording and context neutral to reduce bias

Neutrality means you are not steering respondents toward an answer through wording, examples, or unbalanced options. This is one of the most direct ways to improve validity.

Bias can also come from the respondent, not just the questionnaire. Social desirability, acquiescence (yea-saying), and satisficing (rushing) are common sources of response bias.

Remove leading and loaded language

| Problem | Biased question | Neutral rewrite |
|---|---|---|
| Leading premise | "How helpful was our excellent support team?" | "How helpful was our support team?" |
| Emotionally loaded | "How frustrating was the signup process?" | "How easy or difficult was the signup process?" |
| One-sided options | "How satisfied are you?" (only positive choices) | Use a balanced scale with negative and positive options. |

Avoid agree/disagree when you can

Agree/disagree items invite acquiescence and can be harder to interpret. A more direct question about frequency, quality, or likelihood is usually clearer.

If you do use a Likert scale, write statements that are specific and observable, and label the scale clearly. Our survey maker lets you edit scale labels after adding a Likert question.

"Knowledge is no substitute for carefully designed and tested questionnaires."

Stone, 1993 (BMJ)

Principle 4: Ask one idea at a time (avoid double-barreled questions)

Double-barreled questions mix multiple concepts, creating answers you cannot interpret. Respondents may agree with one part and disagree with the other, and you will not know which drove the response.

Common double-barrel patterns

- Two attributes: "easy and fast," "friendly and knowledgeable," "reliable and secure."

- Two time frames: "during setup and ongoing use."

- Two objects: "website and mobile app."

Bad vs. good rewrites

| Bad | Better (split) |
|---|---|
| "How satisfied are you with the price and quality?" | 1) "How satisfied are you with the quality?" 2) "How satisfied are you with the price?" |
| "Was the trainer engaging and clear?" | 1) "How engaging was the trainer?" 2) "How clear were the explanations?" |

Principle 5: Choose question types that match the data you need

Question type is a measurement decision, not a formatting preference. Pick formats that (1) respondents can answer, and (2) you can analyze reliably.

Closed-ended vs. open-ended

Closed-ended questions are easier to analyze and compare. Open-ended questions are better for uncovering language, reasons, and unexpected issues.

Use open-ended sparingly, and be specific about what you want. See our guides on open-ended questions and multiple-choice survey questions for practical patterns.

| If you need... | Prefer | Example |
|---|---|---|
| Benchmarks over time | Closed-ended rating | "Overall, how satisfied are you...?" |
| Prioritized improvements | Multiple choice + follow-up | "Which 1 change would help most?" + "Why?" |
| New issue discovery | Targeted open-ended | "What is the main reason you contacted support today?" |
| Eligibility for later items | Screeners / skip logic | "Have you used feature X in the last 30 days?" |

Practical survey design guides consistently recommend matching item format to the construct and planned analysis, and limiting open-ended items to reduce burden (Slattery et al., 2011: Otolaryngol Head Neck Surg).

Principle 6: Build answer options and scales you can trust

Many questionnaires fail in the answer choices, not the question stem. Aim for options that are mutually exclusive (no overlap) and exhaustive (cover realistic answers).

Multiple choice: mutually exclusive and exhaustive

For guidance and examples, see answer options best practices. Use these checks in any closed-ended list:

- No overlap: If you offer ranges, do not overlap (use 0-1, 2-3, 4-5 ... not 0-2, 2-4).

- Include "Other" when needed: Add "Other (please specify)" when you cannot list all plausible categories.

- Watch "Select all that apply": It increases effort and can reduce selection. Use only when truly necessary.
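The "no overlap" check can be automated for numeric ranges. The sketch below assumes each option is an inclusive integer range written as a `(low, high)` pair; the helper name `check_ranges` is hypothetical, and it does not handle open-ended options such as "6 or more".

```python
def check_ranges(ranges):
    """Return a list of problems found in inclusive integer (low, high) ranges."""
    problems = []
    ordered = sorted(ranges)
    # Compare each range with the next one in ascending order.
    for (lo1, hi1), (lo2, hi2) in zip(ordered, ordered[1:]):
        if lo2 <= hi1:
            problems.append(f"overlap: {lo1}-{hi1} and {lo2}-{hi2}")
        elif lo2 > hi1 + 1:
            problems.append(f"gap: nothing covers {hi1 + 1}-{lo2 - 1}")
    return problems

print(check_ranges([(0, 1), (2, 3), (4, 5)]))  # clean list: no problems
print(check_ranges([(0, 2), (2, 4)]))          # a respondent with 2 fits both
print(check_ranges([(0, 1), (3, 4)]))          # a respondent with 2 fits neither
```

An overlap violates mutual exclusivity; a gap violates exhaustiveness. Both leave some respondents without exactly one correct choice.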

Rating scales: label clearly and keep them consistent

Rating scales are powerful but easy to misread. See rating scale design for deeper patterns.

| Design choice | Recommendation | Why it helps |
|---|---|---|
| Number of points | Use 5- or 7-point scales for attitudes | Balances sensitivity and respondent effort. |
| Labels | Label endpoints at minimum; label all points for high-stakes items | Reduces interpretation drift across respondents. |
| Direction | Keep direction consistent (e.g., low-to-high) | Reduces misclicks and straightlining. |
| Midpoint | Include a midpoint only if "neutral" is meaningful | Avoids forcing a direction when none exists. |
| N/A vs. neutral | Separate "Not applicable" from "Neither" | Prevents mixing ineligible respondents with truly neutral ones. |

When using agreement scales, follow Likert scale best practices and consider rewriting to direct questions to reduce acquiescence.

Principle 7: Use a logical flow, and write an introduction that earns trust

Respondents do better when the questionnaire feels like a coherent conversation: easy items first, complex items later, and sensitive items only after trust is built.

A practical ordering pattern

Warm-up

Easy, factual, or broadly applicable items that confirm the survey is relevant.

Core topic blocks

Group by topic, keep scales consistent within a block, and use short transitions.

Diagnostics

Reasons, obstacles, or follow-ups that explain the ratings.

Sensitive and demographics

Place later, and ask only what you will use. For patterns, see demographic questions.

Closing

Final open-ended "Anything else?" plus a thank you and next steps (if you can share them).

Intro template you can copy

Keep introductions short. Respondents want to start the survey, not read a page of text.

Purpose: We are collecting feedback to improve [process/product/service].

Time: This survey takes about [X] minutes.

Privacy: Your responses are [anonymous/confidential] and will be seen by [who sees results].

Voluntary: You can skip any question you prefer not to answer.

Contact: Questions? Contact [name/email].

Ethical and validation-focused guidance typically includes clarity about purpose and participant rights as part of good questionnaire development (Boparai et al., 2018: Current Clinical Pharmacology).

Principle 8: Make it mobile-friendly and easy to complete

Assume that at least some respondents will complete any online questionnaire on a phone. Design for small screens first, then for desktops.

For broader experience and layout guidance, see our overview on survey design.

Mobile-first layout rules

- Avoid big grids: Matrix questions are hard to scan on phones and increase straightlining.

- Keep options short: Long options wrap and become harder to compare.

- Use progressive disclosure: Show follow-ups only when relevant (skip logic) to cut clutter.

- Tap targets: Use radio buttons and buttons that are easy to tap, with enough spacing.
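Progressive disclosure through skip logic can be sketched as a simple rule attached to each follow-up. The question list, the `show_if` key, and the `visible_questions` helper below are illustrative assumptions, not the format of any particular survey tool.

```python
# Hypothetical items; "show_if" names the (question id, answer) that reveals a follow-up.
QUESTIONS = [
    {"id": "used_feature", "text": "Have you used feature X in the last 30 days?"},
    {"id": "feature_ease", "text": "How easy or difficult was feature X to use?",
     "show_if": ("used_feature", "Yes")},
    {"id": "closing", "text": "Anything else you would like to share?"},
]

def visible_questions(questions, answers):
    """Return ids of the questions a respondent should see, in order."""
    visible = []
    for question in questions:
        condition = question.get("show_if")
        if condition is None:
            visible.append(question["id"])
            continue
        parent_id, required_answer = condition
        if answers.get(parent_id) == required_answer:
            visible.append(question["id"])
    return visible

print(visible_questions(QUESTIONS, {"used_feature": "No"}))
print(visible_questions(QUESTIONS, {"used_feature": "Yes"}))
```

A respondent who answers "No" never sees the ease question, which keeps the phone screen short and keeps ineligible answers out of the data.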

Reduce perceived effort

Respondents are more likely to finish when the survey feels quick and predictable.

- Keep scales consistent: Repeated re-learning slows people down.

- Use a progress indicator carefully: It helps when accurate; it hurts when it jumps backward due to skip logic.

Principle 9: Plan for coding and analysis while you write

Questionnaire design is also data engineering. If the data cannot be coded cleanly, the survey will create analysis work and uncertainty later.

Design for clean variables

- Keep constructs separate: If you want "speed" and "accuracy," measure them with separate items.

- Standardize time windows: Mixing "last week" and "last year" complicates comparisons.

- Pre-code whenever possible: Replace free text (e.g., "department") with a clean list when you need reporting.
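To see why pre-coding matters for reporting, compare a free-text "department" field with the same answers captured from a fixed list. The codebook and responses below are invented for illustration.

```python
from collections import Counter

# Hypothetical codebook for a pre-coded "department" question.
DEPARTMENT_CODES = {1: "Sales", 2: "Support", 3: "Engineering", 99: "Other"}

# The same six respondents, captured two ways.
free_text = ["Sales", "sales ", "Suport", "Eng", "Engineering", "support"]
pre_coded = [1, 1, 2, 3, 3, 2]

raw_counts = Counter(free_text)
clean_counts = Counter(DEPARTMENT_CODES[code] for code in pre_coded)

print(len(raw_counts), "distinct free-text values")
print(dict(clean_counts))
```

The free-text version needs manual cleanup (case, whitespace, typos, abbreviations) before any department comparison is possible; the pre-coded version can be tabulated directly.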

Do not confuse pilot size with required sample size

A pilot is for finding problems; the full launch is for estimating results. Plan both.

For the full study, use our sample size planning guide. Even the best questionnaire cannot overcome too little data for the comparisons you need.

Principle 10: Pretest, pilot, and iterate

Pretesting is where most questionnaires improve. It catches misinterpretations you cannot see from the writer's chair.

Survey method guides consistently emphasize drafting, pretesting, piloting, and revising as a core development loop (Rattray and Jones, 2007: J Clin Nurs; Leite: University of Florida PDF).

What to do in a pretest

Read-aloud check

Have someone read each question out loud. Anything they stumble on is a rewrite candidate.

Interpretation probe

Ask: "What does this question mean to you?" and "How did you pick your answer?"

Timing and friction

Record completion time, drop-offs, and items that trigger "other" or blanks.

Revise and retest

One round is rarely enough for a brand-new instrument.

Pilot checks before full launch

- Option coverage: Are respondents overusing "Other" or "Prefer not to say"?

- Scale use: Are answers piling up at one end due to wording, not reality?

- Logic correctness: Do respondents see only relevant follow-ups?
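These three checks are easy to run on a pilot export. The sketch below uses a handful of made-up records with hypothetical field names (`used_feature`, `feature_rating`, `contact_reason`); what counts as "too much" for each share is a judgment call and is not set by the code.

```python
# Made-up pilot records; None means the item was not shown or not answered.
pilot = [
    {"used_feature": "Yes", "feature_rating": 5, "contact_reason": "Billing"},
    {"used_feature": "No", "feature_rating": None, "contact_reason": "Other"},
    {"used_feature": "Yes", "feature_rating": 5, "contact_reason": "Other"},
    {"used_feature": "No", "feature_rating": 4, "contact_reason": "Other"},
    {"used_feature": "Yes", "feature_rating": 5, "contact_reason": "Login"},
]

def share(values, target):
    """Fraction of answered values equal to `target`."""
    answered = [v for v in values if v is not None]
    return sum(v == target for v in answered) / len(answered) if answered else 0.0

# Option coverage: how often did respondents fall back on "Other"?
other_share = share([r["contact_reason"] for r in pilot], "Other")

# Scale use: how many ratings sit on the top point of a 5-point scale?
top_point_share = share([r["feature_rating"] for r in pilot], 5)

# Logic correctness: non-users should never have a feature rating.
logic_leaks = [i for i, r in enumerate(pilot)
               if r["used_feature"] == "No" and r["feature_rating"] is not None]

print(f"'Other' share: {other_share:.0%}")
print(f"Top-point share: {top_point_share:.0%}")
print(f"Records that bypassed skip logic: {logic_leaks}")
```

A high "Other" share suggests missing options, a high top-point share deserves a second look at the wording, and any record in `logic_leaks` means a follow-up was shown to someone who should have skipped it.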

For clinical trials, Edwards (2010) highlights that administration and respondent burden can affect data quality, reinforcing the need to test the full experience, not only the wording (Trials).

Common edge cases (and how to handle them)

"Don't know" and "Not sure"

Offer "Don't know" only when it is realistic and analytically meaningful. If you include it everywhere, you may encourage satisficing.

- Include when: The respondent may not have the information (e.g., "What plan are you on?").

- Exclude when: You are asking about the respondent's own experience (e.g., "How satisfied are you?").

"Not applicable" vs. "Neutral"

Do not use "Neither agree nor disagree" as a substitute for ineligibility. Add a true "Not applicable" option or use skip logic.
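A small worked example shows what goes wrong when ineligible respondents land on the midpoint. The `"NA"` code and the answers are assumptions made up for the illustration.

```python
from statistics import mean

NOT_APPLICABLE = "NA"  # hypothetical code for a "Not applicable" answer
MIDPOINT = 3           # "Neither" on a 5-point scale

def mean_rating(responses):
    """Mean of rated answers only; "Not applicable" is excluded, not recoded."""
    rated = [r for r in responses if r != NOT_APPLICABLE]
    return mean(rated) if rated else None

# Made-up answers: two respondents never used the thing being rated.
responses = [4, 5, NOT_APPLICABLE, 2, NOT_APPLICABLE, 4]
forced_to_midpoint = [MIDPOINT if r == NOT_APPLICABLE else r for r in responses]

print(mean_rating(responses))    # 3.75, based on the 4 people who could judge
print(mean(forced_to_midpoint))  # 3.5, pulled toward the midpoint by non-users
```

With a separate option, the two ineligible respondents are counted as such and do not shift the score.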

Sensitive items (income, health, performance issues)

Sensitive questions increase break-offs and misreporting when trust is low. Place them later, explain why you ask, and offer "Prefer not to answer" where appropriate.

If you collect personal data, align the questionnaire with your survey privacy commitments (who can see raw responses, retention, and protections).

Order effects and priming

Earlier questions can influence later answers. When you have long lists (brands, reasons, issues), consider randomizing option order, except when a natural order matters (e.g., frequency).
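One way to randomize a list per respondent is sketched below. Keeping "Other (please specify)" pinned at the end is an added assumption here (a common convention, not something this guide requires), and the function name and option labels are hypothetical.

```python
import random

def randomized_options(options, pinned_last=("Other (please specify)",), seed=None):
    """Shuffle options for one respondent, keeping pinned options at the end."""
    rng = random.Random(seed)  # pass a per-respondent seed to make the order reproducible
    movable = [o for o in options if o not in pinned_last]
    pinned = [o for o in options if o in pinned_last]
    rng.shuffle(movable)
    return movable + pinned

reasons = ["Price", "Missing features", "Support quality", "Other (please specify)"]
print(randomized_options(reasons, seed=42))

# Ordered scales should not be shuffled; present them as written.
frequency = ["Never", "Rarely", "Sometimes", "Often", "Always"]
```

Storing the seed (or the displayed order) with each response lets you check later whether position affected what people chose.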

Translation and cultural fit

Avoid idioms and culture-specific references. If you translate, use back-translation or review by a native speaker who understands the topic, not just the language.

Questionnaire review checklist (quick pass)

- Purpose: Every item maps to a decision or analysis output.

- Clarity: Simple wording, defined terms, realistic recall period.

- One idea: No double-barreled stems or mixed time frames.

- Neutrality: No leading language; balanced options; avoid needless agree/disagree.

- Options: Mutually exclusive, exhaustive, and consistent formatting.

- Flow: Warm-up to core to sensitive; clear transitions; demographics placed intentionally.

- Mobile: No wide grids; short options; tested on a phone.

- Pretest: Someone outside the project interpreted items as intended.

Next steps: get a review, or start from proven templates

If you want feedback on a draft questionnaire (wording, scale choices, flow, or bias risks), use our survey help option.

If you are starting from scratch, templates can speed up structure and ordering. Browse Survey Templates and adapt items using the principles above.

References

- Stone, D. H. (1993). Design a questionnaire. BMJ, 307(6914), 1264-1266.

- Slattery, E. L., Voelker, C. C. J., Nussenbaum, B., Rich, J. T., Paniello, R. C., & Neely, J. G. (2011). A practical guide to surveys and questionnaires. Otolaryngology-Head and Neck Surgery, 144(6), 831-837.

- Rattray, J., & Jones, M. C. (2007). Essential elements of questionnaire design and development. Journal of Clinical Nursing, 16(2), 234-243.

- Boparai, J. K., Singh, S., & Kathuria, P. (2018). How to design and validate a questionnaire: A guide. Current Clinical Pharmacology, 13(4), 210-215.

- Leite, W. (n.d.). Principles for adequate questionnaire design [PDF]. University of Florida, College of Education.

- Edwards, P. (2010). Questionnaires in clinical trials: Guidelines for optimal design and administration. Trials, 11, 2.

Frequently Asked Questions

How many questions should a questionnaire have?

As few as possible to support your decisions and analysis. Instead of targeting a magic number, target a completion time that fits the context (often 3-8 minutes for customer surveys, longer only when respondents are highly motivated). Pretest timing with real people.

Should I include a neutral midpoint on rating scales?

Include a midpoint only when "neutral" is a meaningful attitude. If many respondents may be ineligible to judge, use "Not applicable" (or skip logic) instead of forcing them into the midpoint.

When should I add a "Don't know" option?

Add it when respondents may not have the information (plan type, dates, technical details). Avoid adding it to experience questions (satisfaction, ease) where respondents can usually answer, because it can become an easy escape.

Are open-ended questions "better" than rating questions?

They are better for discovery and detail, but harder to analyze consistently at scale. Many strong questionnaires use both: a small set of closed-ended items for measurement plus one or two targeted open-ended prompts for explanations.

What is the difference between a pretest and a pilot?

A pretest checks comprehension (what respondents think the question means). A pilot is a small live run that checks the full system: drop-offs, option coverage, scale behavior, and whether your planned analysis works on real data.